I am wrapping up a profitability analysis project for a global medical manufacturing company. The goal was to give the management team a Business Intelligence application to show profitability margins for customers, products, geographic locations, and production facilities. The solution provided OLAP reporting on profitability metrics by while supporting multiple currency conversions for every currency the company did business with. To deliver this solution I had to pull financial measures like revenue and expenses as well as statistics on the manufacturing process from Oracle’s JD Edwards Enterprise One.

The idea for this project was to use SQL Server 2012 and use SSIS for the ETL process and then SSAS for the cube. The users would be using Excel 2012 to connect to the cube directly. I am happy to report that I did not run across any issues related to using SQL Server 2012 even though I was using Release Candidate 3 for most of the development phase.

I came across a few things during the SSIS development phase of this project that I wanted to pass along to others business intelligence folks who may be pulling from JD Edwards / Oracle database.

1. Configure 34bit and 64bit OLE DB Oracle providers following

Greg Galloway instructions. These instructions are fantastic. I can appreciate these instructions as I remember the wailing and gnashing of teeth that took place the first time I had to setup Oracle’s OLE DB providers on a 64bit server.

2. Decide on using linked server (TSQL) or OLEDB Source (PL/SQL). My experience was that I got noticeably faster performance when using the OLEDB Source with PL/SQL rather than using the more convenient linked server.

3. Use existing date and time conversions.

Bryant Avey had two useful TSQL functions. One converts the JD Edwards’s date fields, which are represented in Julian date format of CYYDDD where C = Century; YY = a 2 digit year and DD = the 3 digit number representing the day of the year (1 through 365 or 366 days on a leap year) to a Gregorian date. The function was called

DateJ2G

CREATE FUNCTION [dbo].[DateJ2G]

(

@JDEDATE int, @FORMAT int

)

RETURNS varchar(20) AS

--Written by Bryant Avey, InterNuntius, Inc.

--Provided free "As Is" with no warranties or guarantees

--I just ask that you keep these comments in the function, if you use it.

--The complete article describing this function can be found at:

--http://wp.me/pBPqA-a

--This function takes a JDE Julian Date and returns

--a varchar date in the format style you specify

--To us simply pass in the JDE date and the style code

--Style codes can be found at

--For Example: select dbo.DateJ2G(sddgj,101) from f4211

--would return the JDE date in the format of 02/29/2008.

--Select dbo.DateJ2G(108060, 1) = 02/29/08

--Select dbo.DateJ2G(109060, 107) = Mar 01, 2009

--Format codes are standard SQL 2005 Date Convert codes.

--Conversion codes can be found here: http://wp.me/pBPqA-a

BEGIN

DECLARE @sqldate datetime

set @sqldate =

dateadd(day,cast((1900000 + @JDEDATE)%1000 as int)-1,(cast((

cast((1900000 + @JDEDATE)/1000 as varchar(4)) + '-01-01')

as datetime)))

RETURN (convert(varchar(20),@sqldate,@FORMAT))

END

The other function, DateG2J, converts a Gregorian date to a Julian Date. Again this was very useful.

CREATE FUNCTION [dbo].[DateG2J] (@Geogian_in datetime)

RETURNS int AS

--Written by Bryant Avey, InterNuntius, Inc.

--Provided free "As Is" with no warranties or guarantees

--I just ask that you keep these comments in the function, if you use it.

--The complete article describing this function can be found at:

--http://wp.me/pBPqA-a

--This function takes a varchar gregorian date and returns

--a Julian JDE Date

--To use simply pass in the string date

--For Example: select dbo.DateG2J('02/29/2008')

--would return the JDE integer date of 108060.

--Date input formats are standard SQL 2005 DateTime values.

--Any validly formated date string will work such as 'feb 29,2008' to get 108060.

BEGIN

declare @JulianDate_out INT

declare @Century INT

declare @YY INT

declare @DayofYear INT

Select @Century = case when datepart(yyyy,@Geogian_in) > 2000

then 100000 else 0 end

Select @YY = CAST((SUBSTRING(CAST(DATEPART(YYYY, @Geogian_in)

AS VARCHAR(4)), 3, 2)) AS INT)

select @DayOfYear = datepart(dayofyear, @Geogian_in)

SELECT @JulianDate_out = @Century + @YY * 1000 + @DayofYear

RETURN(@JulianDate_out)

END

I created a third function to validate and format the JD Edward’s time fields into a valid time field for SQL Server. My experience is that JD Edwards allows any combination of integers that may have not necessarily represented a valid time in SQL Server (or anywhere else for that matter). The

ValidateJDETime function helped deal with this by checking the time and setting it to midnight if an invalid time was found.

CREATE FUNCTION [dbo].[ValidateJDETime] (@IN_Time VARCHAR(10) )

RETURNS CHAR(8)

AS

--Written by Thomas M. Puch

--Provided free "As Is" with no warranties or guarantees

--I just ask that you keep these comments in the function, if you use it.

----Usage: SELECT dbo.ValidateJDETime ( '112233')

BEGIN

DECLARE @RV CHAR(8),

@vt AS CHAR(6),

@vh AS CHAR(2),

@vm AS CHAR(2),

@vs AS CHAR(2)

SET @vt = REPLACE(STR(@IN_Time, 6), SPACE(1), '0')

SET @vh = SUBSTRING(@vt, 1,2)

SET @vm = SUBSTRING(@vt, 3,2)

SET @vs = SUBSTRING(@vt, 5,2)

IF (@vh BETWEEN 0 AND 23 AND

@vm BETWEEN 0 AND 59 AND

@vs BETWEEN 0 AND 59)

BEGIN

SET @RV = @vh + ':' + @vm + ':' + @vs

END

ELSE

BEGIN

SET @RV = '00:00:00'

END

RETURN(@RV)

END

4. Planning to deal with NCHAR and NVARCHAR2 is something you will want to do early on . Most text fields in JD Edwards are either NCHAR or NVARCHAR2 that are padded with trailing or in some cases leading spaces. Trailing or leading spaces need to be accounted for when matching up to other data using the SSIS Lookup transformation especially if you are joining to data from text files or other source systems. It is likely that the other data sources will not have trailing or leading spaces. This will cause the SSIS Lookup transformations to not find a match in SSIS. This can be time consuming to troubleshoot because at first look the data seems to match and even joins together in a TSQL query. I would suggest using the TRIM function in Oracle on every text field in your PL/SQL query to JD Edwards. This function will remove trailing and leading spaces and will allow your SSIS Lookup Transformations to find matches.

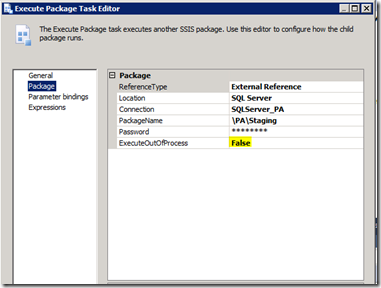

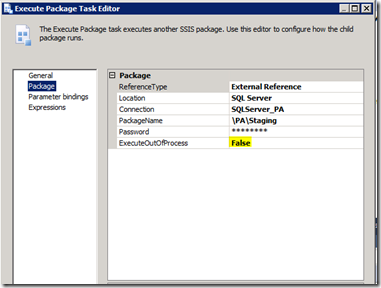

5. When using the Oracle OLE DB provider I found that the property called

ExecuteOutOfProcess, found in the Execute Package Task had to be set to

FALSE if the sub-package used the Oracle OLE DB provider. This was also pointed out in

Greg Galloway’s instructions. Like Greg, I did not really investigate why, but set the configuration and moved on.